Overview

Langfuse is an open-source LLM engineering platform that helps teams collaboratively debug, analyze, and iterate on their LLM applications.

Core platform features

Develop

- Observability: Instrument your app and start ingesting traces to Langfuse (Quickstart, Tracing)

- Track all LLM calls and all other relevant logic in your app

- Async SDKs for Python and JS/TS

- @observe() decorator for Python

- Integrations for OpenAI SDK, Langchain, LlamaIndex, LiteLLM, Flowise and Langflow

- API

- Langfuse UI: Inspect and debug complex logs and user sessions (Demo, Tracing, Sessions)

- Prompt Management: Manage, version and deploy prompts from within Langfuse (Prompt Management)

- Prompt Engineering: Test and iterate on your prompts with the LLM Playground

Monitor

- Analytics: Track metrics (LLM cost, latency, quality) and gain insights from dashboards & data exports (Analytics)

- Evals: Collect and calculate scores for your LLM completions (Scores & Evaluations)

- Run model-based evaluations within Langfuse

- Collect user feedback

- Manually score observations in Langfuse

Test

- Experiments: Track and test app behaviour before deploying a new version

- Datasets let you test expected input and output pairs and benchmark performance before deploying

- Track versions and releases in your application (Experimentation, Prompt Management)

Get started

Why Langfuse?

We wrote a concise manifesto on this: Why Langfuse?

- Open-source

- Model and framework agnostic

- Built for production

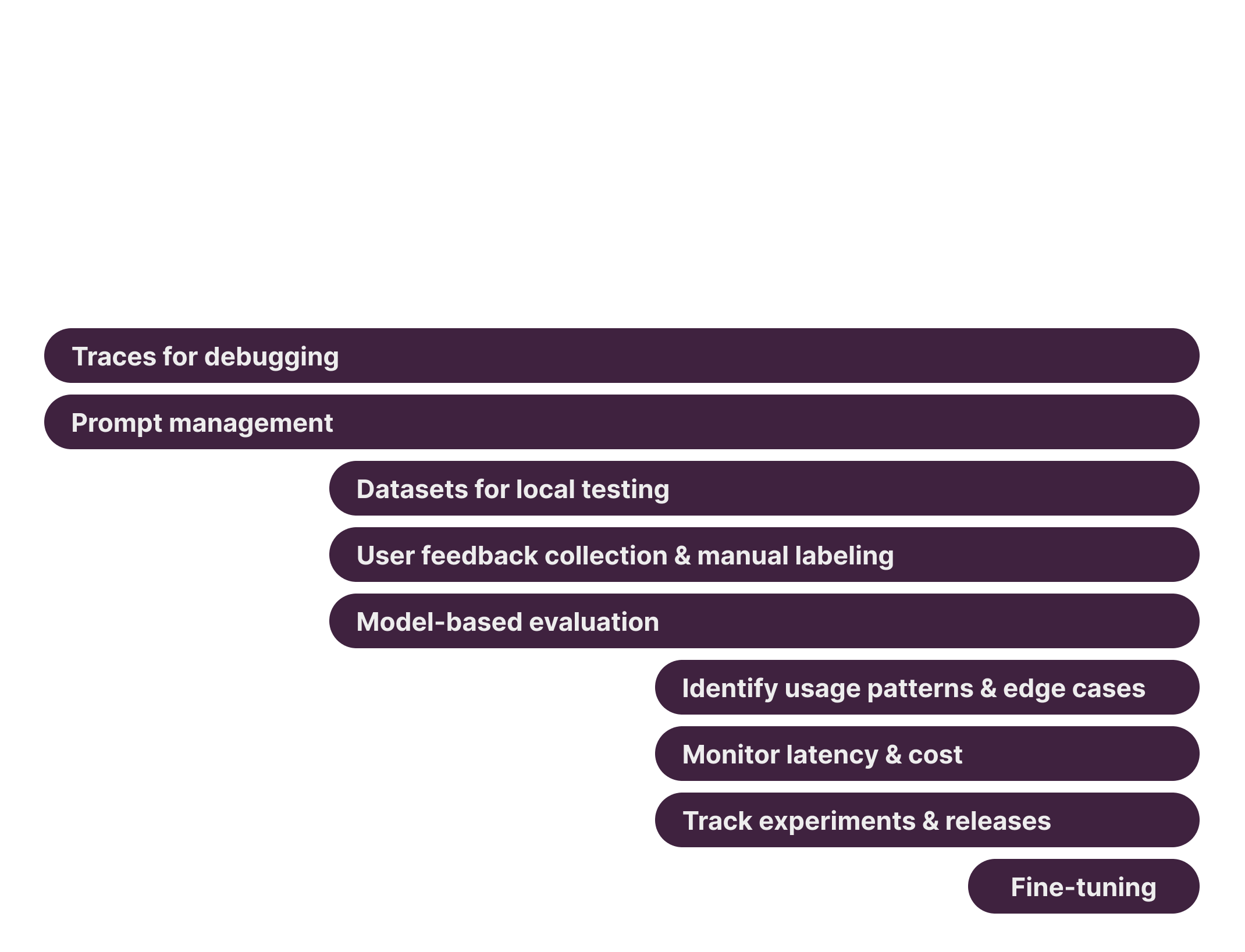

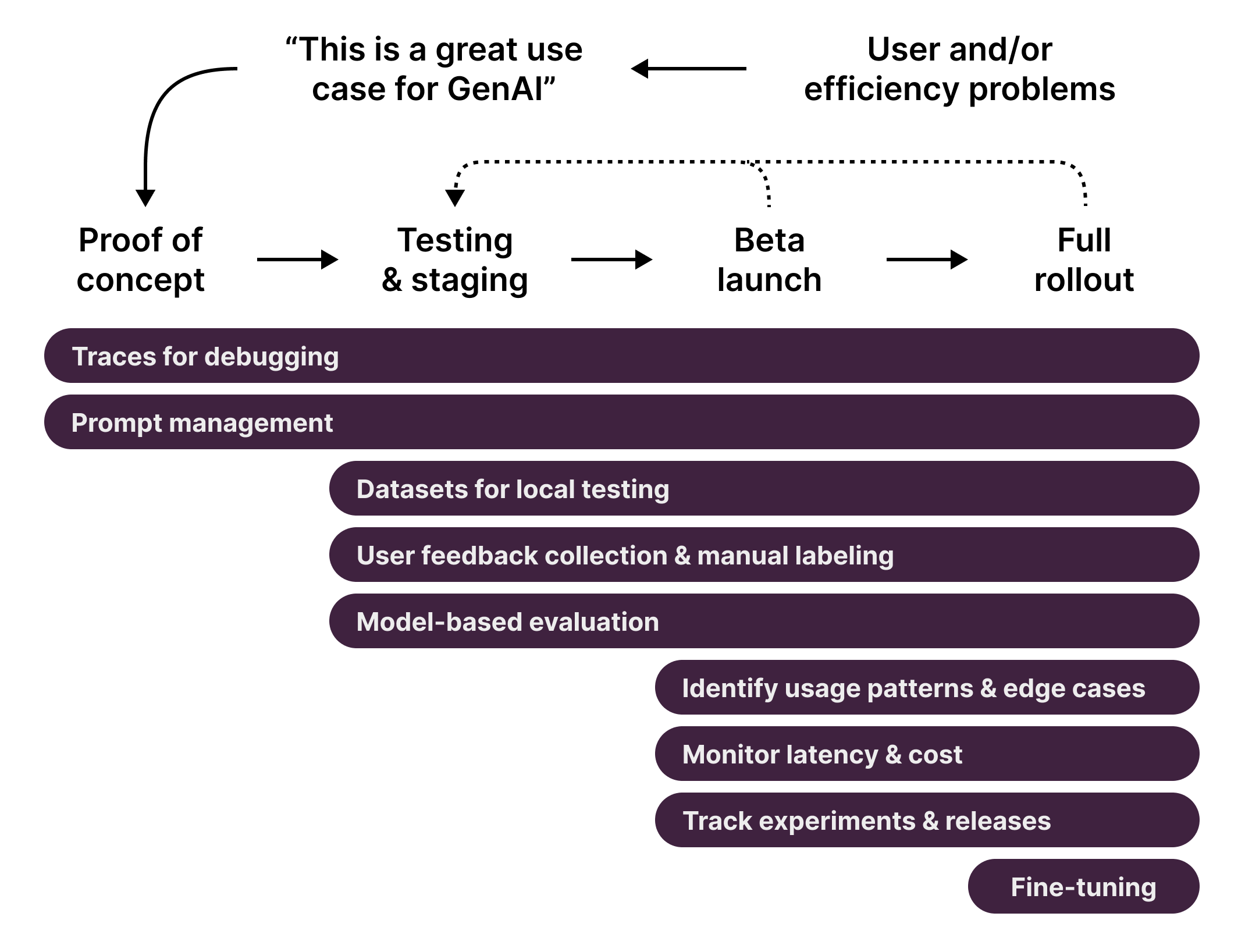

- Incrementally adoptable - start with a single LLM call or integration, then expand to full tracing of complex chains/agents

- Use the GET API to build downstream use cases

Challenges of building LLM applications and how Langfuse helps

In implementing popular LLM use cases – such as retrieval augmented generation, agents using internal tools & APIs, or background extraction/classification jobs – developers face a unique set of challenges that differ from those of traditional software engineering:

Tracing & Control Flow: Many valuable LLM apps rely on complex, repeated, chained or agentic calls to a foundation model. This makes debugging these applications hard as it is difficult to pinpoint the root cause of an issue in an extended control flow.

With Langfuse, it is simple to capture the full context of an LLM application. Our client SDKs and integrations are model and framework agnostic. Users commonly track LLM inference, embedding retrieval, API usage and any other interaction with internal systems that helps pinpoint problems. Users of frameworks such as Langchain benefit from automated instrumentation; otherwise, the SDKs offer an ergonomic way to define the steps to be tracked by Langfuse.
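To make the idea of capturing nested steps concrete, here is a minimal, self-contained sketch. It does not use the Langfuse SDK: the `traced` decorator, the `recorded_spans` list and the three example functions are hypothetical stand-ins that only illustrate how a decorator can record each step together with its parent step.

```python
import functools
import time
from contextvars import ContextVar

# Hypothetical in-memory stand-in for a tracing backend; NOT the Langfuse SDK.
_current_parent = ContextVar("current_parent", default=None)
recorded_spans = []


def traced(func):
    """Record each call as a span, nested under whichever span is active."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        span = {
            "name": func.__name__,
            "parent": _current_parent.get(),
            "input": {"args": args, "kwargs": kwargs},
        }
        # Mark this function as the active parent for any nested calls.
        token = _current_parent.set(func.__name__)
        start = time.perf_counter()
        try:
            span["output"] = func(*args, **kwargs)
            return span["output"]
        except Exception as exc:
            span["error"] = repr(exc)
            raise
        finally:
            span["duration_s"] = time.perf_counter() - start
            _current_parent.reset(token)
            recorded_spans.append(span)
    return wrapper


@traced
def retrieve(query):
    # Placeholder for an embedding lookup.
    return ["doc about " + query]


@traced
def generate(query, docs):
    # Placeholder for an LLM call.
    return f"Answer to '{query}' using {len(docs)} document(s)"


@traced
def answer(query):
    docs = retrieve(query)
    return generate(query, docs)


result = answer("tracing")
for span in recorded_spans:
    print(span["name"], "<-", span["parent"])
```

Each recorded span keeps its input, output, duration and parent, which is the kind of context that helps locate the failing step in a longer chain.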

Output quality: In traditional software engineering, developers are used to testing for the absence of exceptions and compliance with test cases. LLM-based applications are non-deterministic and there rarely is a hard-and-fast standard to assess quality. Understanding the quality of an application, especially at scale, and what ‘good’ evaluation looks like is a major challenge. This problem is exacerbated by changes to hosted models that are outside of the user’s control.

With Langfuse, users can attach scores to production traces (or even sub-steps of them) to move closer to measuring quality. Depending on the use case, these can be based on model-based evaluations, user feedback, manual labeling or other signals such as implicit data. These metrics can then be used to monitor quality over time, by specific users, and across versions/releases of the application to understand the impact of changes deployed to production.
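As a rough illustration of monitoring scores across releases, the sketch below aggregates one score type by application release. The `Score` record, its field names and the sample data are assumptions made for this example; they are not the Langfuse data model or API.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Score:
    trace_id: str
    name: str       # e.g. "user_feedback" or "model_eval"
    value: float
    release: str    # application version that produced the trace


# Hypothetical example data; the field names are illustrative only.
scores = [
    Score("t1", "user_feedback", 1.0, "v1.0"),
    Score("t2", "user_feedback", 0.0, "v1.0"),
    Score("t3", "user_feedback", 1.0, "v1.1"),
    Score("t4", "user_feedback", 1.0, "v1.1"),
]


def mean_score_by_release(items, name):
    """Average the values of one score name, grouped by release."""
    grouped = defaultdict(list)
    for item in items:
        if item.name == name:
            grouped[item.release].append(item.value)
    return {release: mean(values) for release, values in grouped.items()}


by_release = mean_score_by_release(scores, "user_feedback")
print(by_release)
```

Comparing the averages for two releases gives a first, coarse signal of whether a deployed change improved or degraded quality.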

Mixed intent: Many LLM apps do not tightly constrain user input. Conversational and agentic applications often contend with wildly varying inputs and user intent. This poses a challenge: teams build and test their app with their own mental model, but real-world users often have different goals, which leads to many surprising and unexpected results.

With Langfuse, users can classify inputs as part of their application and ingest this additional context to later analyze their users' behavior in depth.
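A minimal sketch of this pattern, under stated assumptions: the keyword rules, the `classify_intent` helper and the record layout are hypothetical and stand in for whatever classifier and ingestion call an application actually uses. The point is only that the intent label travels with the input as metadata so it can be analyzed later.

```python
from collections import Counter

# Hypothetical keyword rules; a real app might use a model-based classifier.
INTENT_KEYWORDS = {
    "billing": ("invoice", "refund", "charge"),
    "support": ("error", "bug", "crash"),
}


def classify_intent(user_input):
    """Return the first intent whose keywords appear in the input."""
    text = user_input.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return intent
    return "other"


def build_trace_record(user_input):
    """Bundle the input with its intent label as metadata for later analysis."""
    return {
        "input": user_input,
        "metadata": {"intent": classify_intent(user_input)},
    }


records = [
    build_trace_record(text)
    for text in [
        "I need a refund for last month",
        "The app shows an error on login",
        "Tell me a joke",
    ]
]

# Aggregate intents to see what users actually ask for.
intent_counts = Counter(r["metadata"]["intent"] for r in records)
print(intent_counts)
```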

Updates

Langfuse evolves quickly. Check out the changelog for the latest updates.

Subscribe to the mailing list to get notified about new major features.

Get in touch

We actively develop Langfuse in open source. Join our Discord, provide feedback, report bugs, or request features via GitHub issues.

Learn more about ways to get in touch on our Support page.